Esri Developer Summit 2012 Insights: Day Two

March 29, 2012

Dan Levine

Steve Riley of Riverbed Technology gave the Keynote Speech today. He spoke about how the Cloud isn’t anything like we think it is and proceeded to tell us why. One of the points he made helped solidify some of my thoughts from yesterday. He was talking about how a system administrator will no longer be unpacking boxes and plugging in power and network cables; he will be doing it with a line of code in a scripting language. The System Administrators of the world are going to become programmers, at least if they want to keep working. I think we all will be adapting how and what we do as we implement solutions with these new technologies.

I spent the middle of the day in the Runtime SDK for iOS sessions. Though I don’t know Objective-C at all, the team did a great job of walking through a pretty deep application in a way that I could appreciate and that gave the developers in the room some value. They spent a good bit of time describing the modular design patterns they employed and, as a bonus, talked about the Apple user interface design guidelines and showed how they implemented them in the application.

I went to the only session on ArcGIS for MS Office, presented by Art Hadaad and his team. They had shown this solution two years ago as an early prototype, but pulled it last year. Well, it has risen again and is currently in beta, with the release of v1.0 due right before the User Conference this summer. Art showed the slick integration of the map control in Excel (as simple as making a chart) and PowerPoint. The solution takes spreadsheet data of just about any form, geocodes it against services running on ArcGIS Online, and returns a map. You can add basemaps and other services from ArcGIS Online or Portal if you have it. Furthermore, once the map is created in Excel, you have some modest but nice controls for trying different renderings, and the map navigation is fully functional. If you like what you see, click a button and create a slide in PowerPoint. The map in the slide can be static or dynamic, meaning it goes full screen and is fully interactive. Once you have created your map in Excel, you can publish it to ArcGIS Online or your Portal. This is going to put authoring and publishing map services in the hands of everyone in an organization. Again, this is changing what we do and how we do it.

Finally, Dodge Ball capped off the day. Our team, Gone in 60 Seconds, lasted 3 rounds this year. It was a great team effort.

Chris Bupp

Day 2 started with a keynote speaker whose topic was “the cloud”. He talked about security in the cloud, and did a good job driving home the point that the cloud is secure. (Every talk so far has mentioned the cloud and demonstrated how to integrate it with ArcGIS Online.)

The first two tech sessions I went to were “Building Applications with ArcGIS Runtime SDK for iOS”, parts 1 and 2. I still think that Objective-C “looks weird”, but I have less reason to argue against native apps now. The SDK supports local basemaps, web maps on ArcGIS Online, and going offline. Developers still need to roll their own offline editing structure, but the team promised that disconnected editing will be supported in the Q4 release. Also, they’ll be posting the source of the slick-looking demo app to the Resource Center next week, so make sure to check it out.

The third tech session was “Software Development and Design Using MVVM”. It focused entirely on WPF, but I could see applying the same principles in other languages, and even in other patterns. Jeff Jackson, the presenter, did a great job demonstrating testability and maintainability even with a simple app. He started off by creating a simple WPF app, and then converted it to MVVM.

The last tech session was “Using Geoprocessing Services with ArcGIS Web Mapping APIs”. The geoprocessing services were published to ArcGIS Online from ArcMap, and then accessed via URL calls. The new features include the ability to create geoprocessing services that (if enabled) will automatically create temporary map layer feature services, which is great for large datasets and raster outputs. Another feature that impressed me was the “File Upload” feature: an application is able to upload a file, and then perform a geoprocessing task on the uploaded file. The demo for the file upload was pretty slick; it involved uploading geo-JPEGs in a zip, then creating a temporary feature layer with the images attached to the points.

Finally, a quick note about dodge ball—we lived by the ball, and we died by the ball. Hopefully next year’s recruits can make it to the championship.

Mike Haggerty

Today started off with a keynote presentation by Steve Riley, CTO of Riverbed Technology. He gave an engaging and provocative talk about everyone’s favorite buzzword: The Cloud. He started out with a good working definition of the cloud: “if you’re still paying for it when it’s switched off, it’s not the cloud.”

Steve went beyond the marketing fluff and gave us some categories and terms to help us ask better questions about cloud-based technology. It is imperative that we begin asking these questions, because according to Steve, we must “adapt or die.” To really drive that point home, he showed a photo of an electric turbine in a Belgian museum. The turbine was used to generate power for an adjacent brewery. But eventually, the brewery was connected to the public grid, and they had no use for the turbine any longer. Steve asked, “What happened to the guy whose job it was to operate and maintain that turbine?” Well, he either went to work for the utility company or he retrained to operate brewery equipment.

We can’t be afraid of The Cloud, but we must adapt or die. That is the story of our lives in the tech sector I suppose. I walked out of the keynote talking with Dan about punch cards…

Since I’m a developer and not a system administrator, I’m not too terribly afraid of The Cloud, as I don’t really care where my code runs. I am, however, more than mildly curious as I survey the RIA landscape and try to determine which client technologies are going to be waxing into and waning out of favor in the next few years. I currently program in Flex, but I’ve attended a few HTML5/JavaScript sessions to brush up on the latest in that space. I think Mansour Raad gave a good, balanced perspective in his HTML5 for RIA developers talk yesterday. He doesn’t think Flex is going anywhere any time soon, but with Microsoft, Adobe, and Apple all promoting HTML5, we would be fools to ignore it.

In that vein, I attended Jeremy Bartely’s session about building a large JavaScript application. He shared many lessons learned in the last three years of developing ArcGIS Online. One challenge they faced was creating a stateless application that had to store user interaction history; for that they ended up using the Persevere Framework. In addition, he shared how to make a single-page Ajax application crawlable, why they used Dojo widgets (ease of localization and accessibility), and how to fall back when browsers don’t support certain HTML5 features. One of the slick HTML5-enabled features he showed was being able to drag and drop a CSV file on top of the web map (this has been around for a while, but I just learned of it today). He showed how that file would be opened using the FileSystem API, the data used to create graphics, and the graphics displayed on the map.
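The "CSV in, graphics out" step is easy to picture in code. What follows is only a minimal sketch of the idea, not the ArcGIS Online implementation: the column names `latitude` and `longitude`, the function name, and the shape of the feature dictionaries are all assumptions made for illustration.

```python
import csv
import io


def csv_to_point_features(csv_text, lat_field="latitude", lon_field="longitude"):
    """Turn CSV text into a list of simple point features.

    Rows whose coordinates are missing or non-numeric are skipped.
    The field names are hypothetical defaults, not a documented convention.
    """
    features = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        try:
            lat = float(row[lat_field])
            lon = float(row[lon_field])
        except (KeyError, TypeError, ValueError):
            continue  # no usable coordinates in this row
        # Everything that is not a coordinate becomes an attribute of the point.
        attributes = {k: v for k, v in row.items() if k not in (lat_field, lon_field)}
        features.append({"geometry": {"x": lon, "y": lat}, "attributes": attributes})
    return features


sample = "name,latitude,longitude\nDepot,34.05,-117.19\nBroken,not-a-number,0\n"
features = csv_to_point_features(sample)
print(features)
```

In a browser, the text would come from the dropped file and each feature would be handed to the map as a graphic; here the second sample row is dropped because its latitude cannot be parsed.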

In his session entitled “Advanced Flex API Development”, Mansour showed how HTML5 and Flex could be complementary. Though the Flash Player can’t access the local FileSystem via drag and drop, with a dozen or so lines of JavaScript, you can handle this in the browser and pass the data into the Flash application via ExternalInterface.

Change is afoot in our industry, but that’s really always been the case, hasn’t it? At GISi, we plan on adapting.

Steve Mulberry

Day 2 started off with an entertaining keynote from Steve Riley, technical leader at Riverbed Technology. A couple of quotes stand out, such as “adapt or die” as it relates to using the cloud. An interesting statistic he shared was that virtual machines now vastly outnumber the physical machines they run on. Another quote: “Systems Administrators will become developers…” With the ability to stand up, manage, monitor, and scale systems in the cloud literally through scripting, sysadmins no longer need to unpack computers and go through the rigors of standing them up on premises. Steve did his best to dispel the myths and concerns about the cloud being insecure, stating that in his opinion the cloud is probably more secure than any internal IT environment.

Securing Applications at 10.1

ArcGIS Server 10.1 introduces new feature-level editing and ownership-based access controls. Through the web APIs, developers will use a new Identity Manager class to automatically manage user access at the feature level, based on the owner of the feature. These new controls add the ability to track the date and time at which users edit features, either at the desktop or over the web. The new web adapter adds a new layer of security and replaces the need for a reverse proxy configuration, as well as taking the place of ArcGIS Instances prior to 10.1.

Esri is trying to address the number one idea submitted to Esri’s ideas web site, which is the need for high-quality printing over the web. Their initial response is to offer a three-tiered approach.

- Simple

- Custom Configuration

- Advanced Scripting using ArcPy.Mapping

The idea is for the developer to use one of the APIs to access the WebMap control and use the new ConvertWebMapToMapDocument method, make any adjustments to the MXD, and then export to PDF. The WebMap will hold all the definitions for each layer or graphic added to the map. There will be a new printing widget available for each API that will be used to construct the JSON for communicating with the new print service. You can read more about it here.

Caleb Carter

This morning the main event for the developer summit was the first topic of discussion. I bet you think I’m talking about the keynote, but I’m referring to the Dodge Ball tournament. It seems that there were a couple of open slots left, and so the audience was encouraged to recognize the vast similarities between Dodge Ball and a host of other sports. Once our mindset had been properly adjusted, the keynote was introduced.

Steve Riley joined us at the summit to talk about the cloud, how to develop for it, how to administer it, and how to secure it. I sat back in my seat and prepared to take rapid notes on the nuts and bolts of getting going in the cloud. What we got was something different altogether. First off Riley set the stage by illustrating the human tendency to fear change and to predict outcomes based on those fears. He then made a bold statement (maybe even crazy given the audience): “Location doesn’t matter.” What? Wait…does he know where he’s standing?

Well I think he did know very well. He was standing in a crowd of technical professionals, many of whom are fairly fond of seeing, housing, and having physical control of their own servers. And he was hitting home the point that physical control over our servers is not all it’s cracked up to be. He wanted to put our minds at ease in terms of this mindset of “if it’s here, then it’s mine, and it’s secure”, whereas “if it’s not here, then it’s not mine, and it’s not secure”. I personally haven’t had this sentiment in relation to the rise of the cloud. Honestly I would have said that my lack of concern is due to naivety and a lack of deep understanding of server infrastructure security, but from now on I’ll be claiming that I knew all along that this cloud thing was legitimate (keep that under your hat please).

In the end Riley made a compelling and logical case as to why under the right circumstances the cloud can be more secure than maintaining your own servers, with dramatically less time and effort spent. I went into this session expecting to come out with a pile of new information and knowledge about how to work in the cloud. What actually happened was that I came away wanting to set aside some time to try out the cloud. In my mind the inspiration to try out something new is far more valuable than knowing how to do it. That inspiration will drive the learning.

It was a great presentation—engaging, on point, and relevant.

Then came more technical sessions…

ArcGIS for SharePoint

Esri provides a configurable and ready-to-deploy SharePoint extension based on their Silverlight capability, which I learned a little bit about yesterday. The extension to SharePoint consists of three basic parts: a Map web part, a Geocoding workflow, and a Location field type. With these three capabilities, SharePoint lists or libraries that include address information can come to life very quickly and easily in the Map. Here’s a quick rundown of the capabilities (some of them at least):

- Add point location fields to any Lists or Libraries that contain address information.

- Load ArcGIS Server and ArcGIS Online maps to the web part.

- Select SharePoint items in the map and the corresponding item is highlighted in the list, and vice versa.

- Generate locations for SharePoint data using the Geocoding workflow.

- Interactively select the correct address match from the workflow results – this was particularly slick.

- Move the pin location in the map to fine-tune the location of a list item – also very cool!

- Configure any available locator (even custom locators).

This capability is very tightly integrated, and all of the use and configuration of the web part and workflow is available right in the ribbon, like it was made for it :). And if the out-of-the-box capability, as great as it is, is not enough for you, the web parts can be extended using the extensibility SDK for Silverlight. Extensions can consist of Tools, Behaviors, Layouts, or custom Controls.

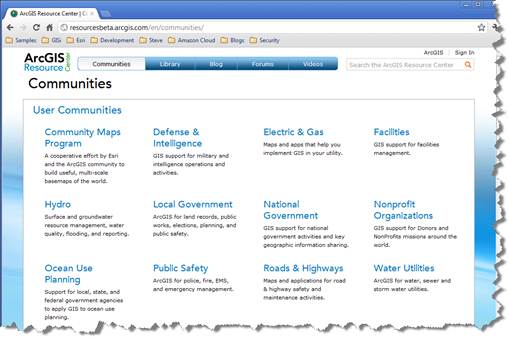

It’s pretty cool stuff, and best of all there’s a pretty thorough site in the Resource Center.

Introduction to MVVM and Custom Controls

This user presentation was cool. It was a short presentation that consisted primarily of a simple example of a Silverlight application using MVVM, with a straightforward code and process walkthrough. As a newcomer to MVVM, I was primarily interested in getting the basics: what are the pieces, how are the lines drawn, and what is the payoff. I certainly got this…and I’m excited to try it as soon as I have the opportunity.

So what are the parts/lines of concern?

- The Model is the low level data access. It generally runs on the server, and is often mostly auto-generated. The interface to this conceptual layer consists of web services.

- The View is the markup (XAML), which makes up the user interface. There is little or no code behind it, and the creator of this layer should be a designer.

- The ViewModel is the layer that facilitates communication between the Model and the View, which are, for good reason, “speaking different languages”. The interface to the Model consists of service calls. The interface to the View consists of binding properties, collections, and commands.

And what do we get for following this model? The ViewModel contains the real logic of the application, and it is VERY testable.
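The testability claim is easier to see with a toy example. The session used Silverlight and XAML; the sketch below is a language-neutral stand-in written in Python, with hypothetical class names, a hard-coded list in place of web service calls, and a plain callback list in place of real data binding.

```python
class CityModel:
    """Model: data access. In a real app this would wrap web service calls."""

    def __init__(self, cities):
        self._cities = list(cities)

    def search(self, prefix):
        return [c for c in self._cities if c.lower().startswith(prefix.lower())]


class SearchViewModel:
    """ViewModel: holds state and logic; a view would bind to its properties."""

    def __init__(self, model):
        self._model = model
        self._query = ""
        self.results = []
        self._listeners = []

    def subscribe(self, callback):
        # A view would register here so it can refresh when a property changes.
        self._listeners.append(callback)

    @property
    def query(self):
        return self._query

    @query.setter
    def query(self, value):
        self._query = value
        self.results = self._model.search(value) if value else []
        for notify in self._listeners:
            notify("results")


# Exercising the application logic with no user interface at all.
vm = SearchViewModel(CityModel(["Redlands", "Reno", "Palm Springs"]))
changed = []
vm.subscribe(changed.append)
vm.query = "re"
print(vm.results)
```

Because the ViewModel never touches a widget, a unit test can set `query` and inspect `results` directly, which is the payoff described above.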

There were a couple of important points that were made at the end of the presentation to help make your MVVM adoption successful:

- Do the tests…if you don’t do the tests, you’re not taking advantage of the most important reason for using MVVM!

- If you have to put a little code-behind in the view…don’t worry about it. Set your sights on the ideal implementation, but don’t let the realities of the task at hand force you to do more than necessary in order to adhere strictly to the pattern.

ArcGIS Runtime SDK for Windows Phone

I know this is going to sound familiar, but this SDK is also based on Silverlight, which we covered yesterday. The list of new features and capabilities is nearly the same, so I’ll jump right into the few things that set this SDK apart. First, the one limitation that this SDK has compared to the other Mobile SDKs seems to be the inability to work disconnected. This isn’t really an Esri issue; it has to do with the fact that the OS is pretty restricted in terms of access to resources, so copying data onto the device and accessing it there is not as straightforward as with other platforms.

Where I really got excited in this talk was when I discovered that the Resource Center for Windows Phone includes an emulator packed full of sample capabilities and their corresponding code snippets. Very cool! Not sure I’ll have a WP project in the near future, but the Emulator seems to be more than sufficient for further exploration!

That rounded out the second day of technical absorption. More tomorrow!

Sean Savage

So, the cloud… “adapt or die”, according to Steve Riley during his keynote address. It sounds dramatic, but he put it into context through a strange but relevant analogy involving John Philip Sousa and a gramophone! Essentially, the point was that the cloud is changing the way we do business and will continue to do so. Those who don’t embrace the change may, well…die, at least within this career/industry. In reality, he presented the cloud from a different perspective, arguing that the cloud may well be more secure than the traditional on-premises model. Likewise, the changing paradigm could inspire creativity in solutions, offering freedom and flexibility that can and will open up new opportunities. Of course, freedom and flexibility don’t mean no responsibility, and we can still undermine the cloud as a valid and robust solution with poor development, implementation, and decision making in general. It was an entertaining and thought-provoking presentation, if not always politically correct.

Following the keynote, I attended a session on Agile Software Development. I am admittedly not well read in the agile approach, and as I mentioned in my post from yesterday, I have no experience to speak of. Nonetheless, I thought the content was quite good (though the delivery was a little dry, which made it just a touch hard to stick with), and very informative to the untrained ear. Unlike the agile session yesterday, this session did provide a solid overview of the agile approach and its origins. Although many of our projects on the State & Local Government side may not justify or support team sizes that fit within the recommended range (5-9 or 6-10, depending on who was speaking), the basic approach seems like it would be a good fit for the pace and suitable to meet the needs of the clients. I thought some of the discussion could have implications for the way we approach huddles, both project and team. That is, leveraging the huddle to have each individual strictly speak to three things every day: (1) What have you done since the last huddle? (2) What are you going to do until the next huddle? And (3) what are your impediments? But I actually found the approach to estimation among the most intriguing concepts, such as relative estimation, wherein you identify one product backlog item (PBI) that you can digest and understand well. All other PBIs are then estimated relative to that one, not by hours, but rather by complexity (i.e., 2x harder, etc.). If I understood it correctly, you can then use the hours for that one baseline PBI to calculate the estimates for the others. I have a lot to learn!
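As a worked example of that estimation arithmetic, here is a minimal sketch. The backlog items, the relative sizes, and the 8-hour baseline are all made-up numbers for illustration; the session did not prescribe a formula beyond scaling from a well-understood baseline item.

```python
def estimate_hours(baseline_hours, relative_sizes):
    """Scale a known baseline estimate by each item's relative complexity."""
    return {item: baseline_hours * size for item, size in relative_sizes.items()}


# Hypothetical backlog: sizes are multiples of the well-understood baseline item.
sizes = {"login form (baseline)": 1, "address search": 2, "print layout": 3.5}
estimates = estimate_hours(8, sizes)
print(estimates)
print(sum(estimates.values()))
```

Only the baseline item is ever estimated in hours; everything else is sized by comparison, so revising the baseline automatically rescales the whole backlog.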

I then attended a session on Leveraging OGC Capabilities for ArcGIS Server. It was an interesting presentation on another topic in which I haven’t gained much experience, but ultimately the presentation and demonstration showed mostly out-of-the-box Server administration to expose the OGC capabilities for each service type, and then consuming those capabilities with open source client applications. Like I said, it was interesting and may be applicable for non-Esri clients who want to consume hosted services (either by Esri or other parties). I need to give some more thought to how widely applicable it could/would be.

The third session of the day was on Extending ArcGIS Server 10.1 Services. With the push to migrate to SOEs as 10.1 looms, I thought this was quite a relevant and interesting session. The presenters walked through the process from writing, through deploying and publishing, to consuming a 10.1 SOE. They were quick to point out that many Esri clients and partners had developed services that may now be addressed by other features coming with 10.1, and therefore not all services may require migration. However, consistent with so many other features of Server at 10.1, the process of deploying an SOE is substantially simplified, and is now completed directly through ArcGIS Server Manager. The examples were simple, but well worth the time of the session.

The final session of the day was on Map Caching in ArcGIS 10.1 for Server. Although I missed the first several minutes of the session due to a mistake in the agenda (I swear!), I walked in to see that once again the process has been streamlined at 10.1, with much greater flexibility and far more reporting. Access to cache management tools is now facilitated by context menus directly on the services in ArcCatalog. There are tools to estimate the cache size per the configuration of the service, and even new tools and data to support very detailed review of what has been cached and when. Caches can also be fired off through the Catalog window and then run asynchronously, allowing the user to continue to interact with ArcMap and even close the application and check on status later. Of course, there are also advances in publishing to the cloud and ArcGIS Online, a very consistent theme throughout!

Ben Taylor

The day began with a lively keynote speech concerning cloud development. Steve Riley of Riverbed Technology gave an inspired speech that attempted to demystify and debunk misconceptions surrounding cloud development. Steve touched on a broad range of topics, including software architecture and security.

During the afternoon, I attended technical sessions focusing on the JavaScript and Flex APIs. The JavaScript session rehashed a few functionalities new to 10.1 that were showcased in the plenary session. Network connectivity problems hindered the presentation a bit but the presenters pushed through…a difficult task considering they were demonstrating performance enhancements: geometries coming back from ArcGIS Server are now generalized based on the scale at which the request was made. The end result was a dramatically smaller JSON object coming back across the wire.
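To illustrate why generalizing at the request scale shrinks the payload, here is a small sketch. The session did not say which algorithm the server uses; Douglas-Peucker is swapped in here as a well-known stand-in, and the sample line and tolerance are invented for the example.

```python
import json
import math


def _point_line_distance(p, a, b):
    """Perpendicular distance from point p to the line through a and b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    if dx == 0 and dy == 0:
        return math.hypot(px - ax, py - ay)
    return abs(dy * px - dx * py + bx * ay - by * ax) / math.hypot(dx, dy)


def generalize(points, tolerance):
    """Douglas-Peucker: drop vertices that deviate from the line by <= tolerance."""
    if len(points) < 3:
        return list(points)
    distances = [_point_line_distance(p, points[0], points[-1]) for p in points[1:-1]]
    farthest = max(range(len(distances)), key=distances.__getitem__)
    if distances[farthest] <= tolerance:
        return [points[0], points[-1]]
    split = farthest + 1  # index of the farthest vertex in `points`
    left = generalize(points[: split + 1], tolerance)
    right = generalize(points[split:], tolerance)
    return left[:-1] + right  # the split vertex appears in both halves


# A line with small wiggles and one real spike; at a coarse scale the
# wiggles are invisible, so a larger tolerance removes them.
line = [(0, 0), (1, 0.01), (2, 0), (3, 0.02), (4, 0), (5, 5), (6, 0), (7, 0.01), (8, 0)]
simplified = generalize(line, tolerance=0.1)
print(simplified)
print(len(json.dumps(line)), len(json.dumps(simplified)))
```

A client zoomed far out would request a larger tolerance, and a client zoomed in a smaller one; the spike survives while the sub-tolerance wiggles disappear from the serialized JSON.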

The second afternoon session dealt with the Flex API. Some of the new features scheduled to be available in the 3.0 release include a content navigator, the ability to cancel GP jobs, improved KML support, and editor tracking. One of the more impressive features was the new dynamic layers, which include the ability to change layer ordering and symbology via a REST request.

I wrapped up the day with a couple of presentations that included Mansour Raad as the presenter, which is always a treat. The first session dealt with some advanced topics in Flex. Mansour demonstrated a few example applications that highlighted the benefits of using MVC. Then, by combining HTML5 JavaScript and AS3’s ExternalInterface class, he demonstrated how it was possible to perform drag and drop in Flex (similar to the JavaScript API’s D&D). He wrapped up the session by showing a solution he developed that used a NoSQL database, MongoDB. MongoDB’s spatial filtering can only handle simple circles and squares, so he extended it to complex polygons by passing the polygon’s envelope to the query and then clipping the results with Flex’s native point-in-polygon filter.
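The envelope-then-clip trick is a classic two-pass spatial filter, and it can be sketched without either MongoDB or Flex. In the minimal example below, a plain list comprehension stands in for the database's box query and a ray-casting test stands in for Flex's point-in-polygon filter; all function names and sample coordinates are hypothetical.

```python
def envelope(polygon):
    """Bounding box (min_x, min_y, max_x, max_y) of a list of (x, y) vertices."""
    xs, ys = zip(*polygon)
    return min(xs), min(ys), max(xs), max(ys)


def in_envelope(point, box):
    x, y = point
    min_x, min_y, max_x, max_y = box
    return min_x <= x <= max_x and min_y <= y <= max_y


def in_polygon(point, polygon):
    """Ray casting: count edge crossings of a ray going right from the point."""
    x, y = point
    inside = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y):  # edge straddles the ray's y value
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def points_in_polygon(points, polygon):
    box = envelope(polygon)
    # Coarse pass: cheap box test (the part delegated to the database).
    candidates = [p for p in points if in_envelope(p, box)]
    # Exact pass: clip the candidates to the real shape (the client's part).
    return [p for p in candidates if in_polygon(p, polygon)]


# An L-shaped polygon: its envelope is a 4x4 square, but the upper-right is empty.
l_shape = [(0, 0), (4, 0), (4, 1), (1, 1), (1, 4), (0, 4)]
points = [(0.5, 0.5), (3, 3), (5, 5), (0.5, 3), (3, 0.5)]
hits = points_in_polygon(points, l_shape)
print(hits)
```

The point (5, 5) never leaves the "database" because it fails the box test, while (3, 3) passes the box test but is clipped by the exact pass, which is why both steps are needed.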

The last presentation featured both Sajit Thomas and Mansour. It was one of the most entertaining presentations I’ve seen in a while. They demonstrated several applications written in HTML5 and Flex. The slide content and scripted banter between them were priceless, and kept the audience in stitches. The applications included such functionalities as voice control and an attempt at mind reading, and ended with a helium-filled shark that was controlled by a Flex app. So it was an entertaining session to say the least.